I needed a licensing comparison I'd written three months earlier. I remembered writing it. I could picture the headers, the conclusion, the table comparing three options. I just couldn't find it.

I had over 500 documents at that point. Architecture decisions, research notes, deployment runbooks, meeting summaries. All created with AI assistance over months of focused work. Each one made sense when I wrote it. Together they formed a collection I couldn't navigate.

In 2005, Thomas Limoncelli published a time management book for system administrators. His central analogy stuck with me: your brain is RAM. Fast, limited, volatile. Use a ticketing system as your hard drive. Write everything down so you can safely forget.

We took the advice. We built wikis, issue trackers, Notion workspaces, Obsidian vaults. Bigger and bigger hard drives. But nobody asked the follow-up question: where's the search engine?

Existing trackers have issues full of long technical comments. Architecture decisions, schema discussions, research findings buried in thread replies. Search is keyword-only. You search "authentication flow" and get nothing because the comment said "login sequence." You attach a screenshot of an error and it becomes invisible. Knowledge that exists in the system but can't be retrieved.

The problem isn't that we don't write things down. We do, more than ever. The problem is getting it back out.

Write everything down

Stage one was Limoncelli's prescription. A document for every decision, every finding. At 20 documents this works. At 50, you start forgetting what you already covered. At 100, you spend more time searching your own notes than writing new ones. At 500, the collection is a graveyard of good intentions.

So I automated the structure. I instructed my AI assistant to create YAML metadata for every document. Compressed summary, tags, project name, date, status, estimated token count. The AI maintained these automatically as content changed.

Then I went further. I built a manifest system. A root _manifest.yaml file that listed every project and its document count, and a per-project manifest with each document's summary, tags, dependencies, and token estimate. The idea was a table of contents for the AI: read the 200-token manifest before grepping through 50,000 tokens of actual content. Save time, save money.

The structure looked something like this (project names changed, but the shape is real):

# _manifest.yaml (root)

total_documents: 738

sections:

- section: projects/portfolio-tracker

document_count: 6

types: [architecture, research]

- section: projects/speech-api

document_count: 10

types: [guide, reference]

- section: projects/seo-toolkit

document_count: 6

types: [research]

- section: servers

document_count: 5

types: [server-config]Each project directory had its own manifest with per-document details:

# projects/portfolio-tracker/_manifest.yaml

documents:

- path: billing-design.md

summary: Subscription billing with prorated upgrades

token_estimate: 4237

depends_on: [investment-memo]

- path: competitive-landscape.md

summary: Market analysis after two competitors shut down

token_estimate: 4505

- path: feature-ideas.md

summary: AI-powered alerts, screener, multi-source data

token_estimate: 2863

depends_on: [competitive-landscape, investment-memo]Each document also had its own YAML frontmatter so the AI could read the header and decide whether to continue:

# projects/portfolio-tracker/billing-design.md

---

id: pt-billing-design

project: portfolio-tracker

type: architecture

status: active

summary: Subscription billing: calendar-month cycles,

prorated upgrades, payment gateway integration

tags: [billing, subscriptions, payments]

token_estimate: 4237

---

# Feature ideas

*Date: 2026-02-18*

*Status: Draft specification*

*Related: [investment-memo.md](investment-memo.md),

[competitive-landscape.md](competitive-landscape.md),

[monetization-brief.md](monetization-brief.md)*The AI would read the frontmatter, see the summary and tags, and skip the document if it wasn't relevant. The related links at the top connected documents into a web — an investment memo linked to the competitive landscape, which linked to the feature spec, which linked back to the monetization brief. I was building a wiki out of markdown files and YAML headers, with a script to keep the cross-references in sync.

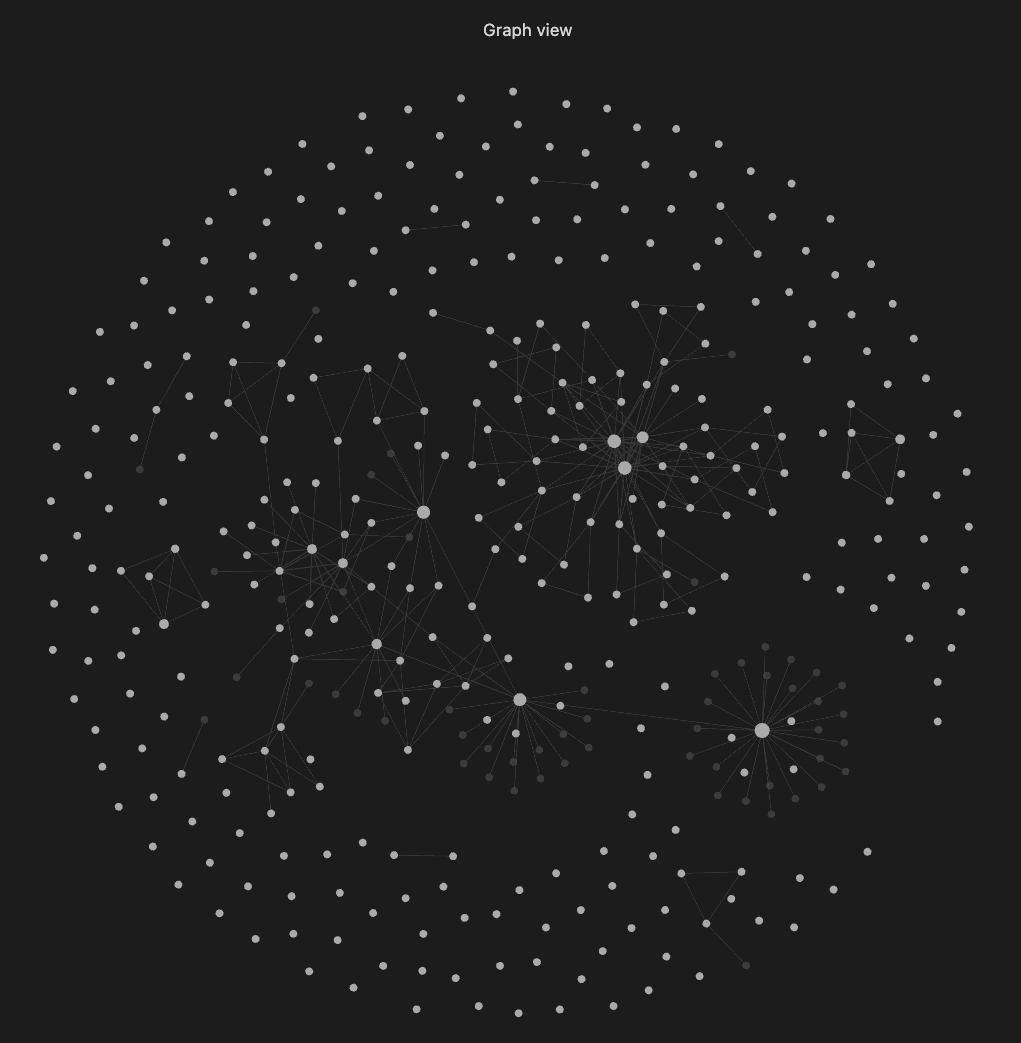

At some point I stepped back and looked at what I'd built. Metadata fields. Cross-linked documents. Version tracking through Git. A manifest index. Status tags. It was a project tracker and a wiki, held together with scripts and YAML. It covered everything from side projects (a bond screener idea, an API wrapper) to infrastructure docs and research notes. I wrote a script to regenerate the manifests automatically and added instructions so the AI would read the manifest first, decide which documents were relevant, and only then read the full content.

For a while this worked remarkably well. I could filter by project, by type, by tags I half-remembered. The AI could scan the manifests in seconds and skip straight to the documents it needed. But it was still keyword search underneath. The manifest told the AI where things were. It couldn't tell it what things meant. When I searched for "pricing strategy," it didn't find the monetization brief because the summary said "freemium subscription model." Same concept, different words. The manifest made navigation efficient. It didn't make retrieval smart.

Then I started collaborating with a friend on one project. Not a developer. I told him I'd handle the metadata, the structure, all the tooling. He'd just write. We picked Obsidian and Git for sync.

It broke immediately. Not the writing. The syncing. Every change needed a commit. He'd edit a file, I'd edit the metadata, we'd both push. Merge conflict. For someone who isn't technical, a Git merge conflict is a wall. HEAD, <<<<<<<, >>>>>>>. He shouldn't have to know what those mean.

Scale that to three or four people. A small team, a tiny company. Everyone needs to write, everyone needs to find things, nobody wants to learn Git. Within weeks, people stop committing. Then they stop writing. The knowledge goes back into their heads. Right where Limoncelli said to get it out of.

Solo tools don't become team tools by adding version control. The jump from "works for me" to "works for us" needs different architecture entirely.

Hacking an existing tracker

Markdown and Git failed for collaboration. The obvious next step: an existing open-source project tracker. Web interface, multiple users, no merge conflicts.

I wrote a Python CLI so my AI assistant could interact with the tracker. Create issues, update statuses, add comments. I gave the AI its own user account so the activity log showed what the human did versus what the AI did. That worked.

Then I needed custom metadata on issues. Project phase, component, commit hashes, branches. The tracker didn't support arbitrary fields. So I stuffed YAML frontmatter inside issue descriptions. Then built filtering logic on top. Hack on hack.

Comments became important too. Long technical discussions, architecture decisions buried in threads. Search needed to cover those. Then the wiki. Documents too long for the AI's context window needed chunking and summarization.

The CLI had a deeper problem. Every AI command needed explicit approval. Yes, no, yes, no, over and over. Allow everything automatically? Now you've given an AI unrestricted shell access. MCP fixed the approval problem with structured tools instead of raw commands. But MCP permissions live on the client side. If the client is misconfigured, the permissions are meaningless. So I built a permission layer on the server: the tracker itself decides what each agent can do, regardless of which client is calling. The permissions live where the data lives.

Versioning was another gap. With files, even if the AI commits automatically, humans forget. The AI overwrites something and the previous version is gone. No diff, no history, no way back.

I looked at this pile of workarounds and realized I wasn't extending a tracker. I was building a different product on top of something that resisted me at every step. Time to stop hacking and start building.

Search that understands meaning

Keyword search fails because humans aren't consistent. You wrote "auth flow" in January, "login sequence" in March, "SSO integration" in June. Same concept, three different phrases. Keyword search treats them as unrelated.

Hybrid search fixes this. Vector embeddings capture semantic meaning. Full-text search handles exact terms. Combine them and "how does authentication work" finds all three documents, ranked by relevance. Add metadata filtering: narrow by project, component, date range, any custom field. The search knows what things mean, not just what words they contain.

Then the last piece. AI agents that query the knowledge base directly. You don't search. The agent does. And it's efficient: instead of pulling a full 10,000-word page into context, the system returns a summary with section headings. The agent requests only the section it needs. A query that would burn 15,000 tokens uses 600. That's 96% less, which translates directly to lower API costs and more room in the context window for actual work.

Every edit gets versioned automatically. Human or AI, browser or API. Full diffs between any two versions, one-click revert. Nobody has to remember to commit.

The missing layer

Limoncelli's analogy, taken to its conclusion:

Your brain is RAM. We knew that.

The ticketing system is the hard drive. We built that.

Hybrid search is the search engine. It was never part of the tracker.

AI agents with knowledge base access are the DMA controller (hardware that reads from disk without going through the CPU). We're building that now.

We spent twenty years making bigger hard drives. More docs, more wikis, more Notion pages. The retrieval layer was never there. The knowledge exists. It's inaccessible.

So I built something

A self-hosted platform where search understands meaning, metadata is structured, versioning is automatic, permissions are enforced server-side, and AI agents can query the knowledge base without eating your context window.

It's called Specivo. Open source, no per-seat pricing.

The hard drive finally has a search engine.